With jumps to the limiting linear model (LLM). tgp Bayesian nonstationary, semiparametric nonlinear regression and design by treed Gaussian processes.stadmod Various statistical modeling functions including growth curve comparisons, limiting dilutionĪnalysis, mixed linear models, heteroscedastic regression, Tweedie family generalized linear models, the inverse-Gaussian.The details of the method are explained in Sch\"afer and Strimmer (2005) and Separate shrinkage for variances and correlations. corpcorThis package implements a James-Stein-type shrinkage estimator for the covariance matrix, with.MASSFunctions and datasets to support Venables and Ripley, 'Modern Applied Statistics with S' (4th.Vector machines, shortest path computation, bagged clustering, naive Bayes classifier. e1071 Functions for latent class analysis, short time Fourier transform, fuzzy clustering, support.The packages that need to be installed and theirs descriptions are listed below:

The tuning parameters can be found using either a fixed grid or a interval search. The smoothly clipped absolute deviation (SCAD), 'L1-norm', 'Elastic Net' ('L1-norm' and 'L2-norm') and 'Elastic SCAD'(SCAD and 'L2-norm') penalties are available.

This package provides feature selection SVM using penalty functions. The package described in this section is penalizedSVM. This absolute maximum of the SCAD penalty, which is independent from the input data, decreases the possible bias for estimating large coefficients. For large coefficients, however, the SCAD applies a constant penalty, in contrast to the L1 penalty, which increases linearly as the coefficient increases. pλ (w) corresponds to a quadratic spline function with knots at λ and aλ.įor small coefficients, the SCAD has the same behavior as the L1. With tuning parameters a > 2 (in the package, a = 3.7) and λ > 0. So, the example belongs to the dichotomy if f ( x t e s t ) > 0. The output for an example from the test set x t e s t would be y t e s t = s i g n.

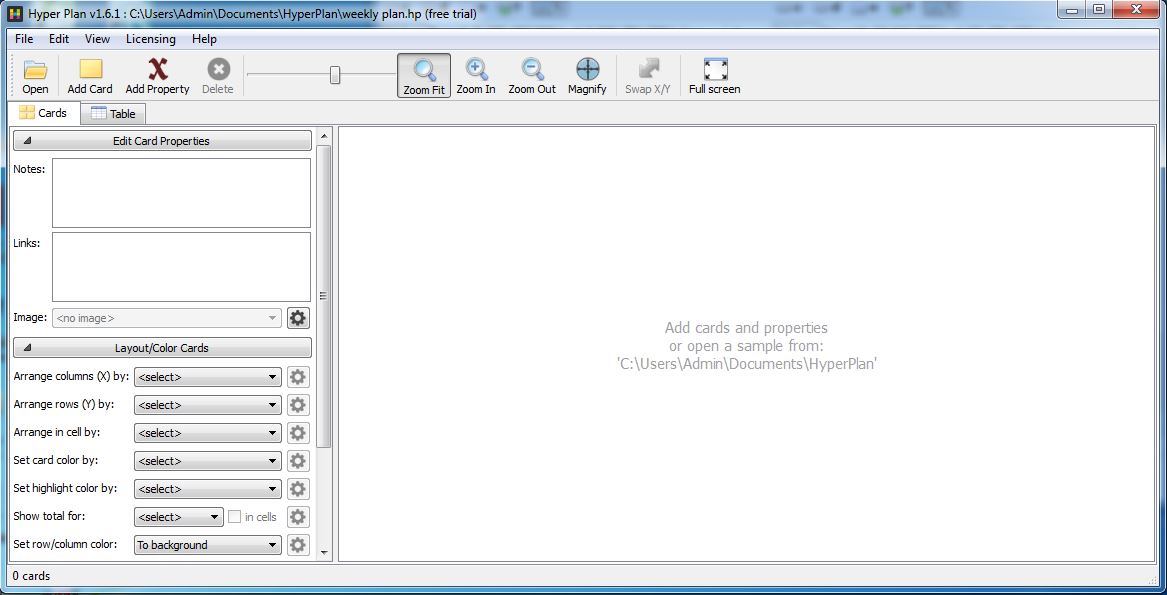

, w d ) are the coefficients of the hyper-plan and b denotes the in- tercept of the hyperplane. The SVM divides the space by a linear boundary: f ( x ) = ∑ j = 1 d w j h j + b where w = ( w 1. , ( x n, y n ), where x i is a d -tuple of the d input parameters i, and y i ∈ − 1, 1 where y i = 1 means i belongs to the dichotomy, and y i = − 1, the opposite. Technique/Algorithm Algorithm Given a training dataset ( x 1, y 1 ). The technique is implemented on the R-package called penalized SVM, that has smoothly clipped absolute deviation (SCAD), 'L1-norm', 'Elastic Net' ('L1-norm' and'L2-norm') and 'Elastic SCAD' (SCAD and 'L2-norm') as available penalties. The technique described here is a variation of the standard SVM using penalty functions. New examples are then mapped into that space so we can predict the categories they belong, based on the side of the gap it falls on. Representing the examples in a d-dimension space, the model built by the algorithm is a hyper-plane that separates the examples belonging to the dichotomy from the ones that aren't. Given a set of training examples, an SVM algorithm builds a model that predicts what are the categories of the test set's examples. The test set contains the examples that should have their classes predicted. The difference between the training and the test set is that, on the training the examples' classes are known beforehand. On Machine Learning-based algorithms such as SVM, the input data has to be separated on two sets: a training setĪnd a test set. Because of this characteristic, SVM is a called a non-probabilistic binary linear classifier. That's most common use the algorithm to predict if the input belongs to certain dichotomy, or not. The standard SVM implementation SVM takes a input dataset and, for each given input, predicts which of two possible classes the input set belongs to. The most well known SVM algorithm was created by Vladimir Vapnik. 
There are a lot of implemented techniques, but we may point SVM (Support Vector Machine) as one of the most powerful, especially in high-dimension data. Classifiers are some of the most common data analysis tools.
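
To make the linear decision rule from the algorithm description concrete, here is a minimal Python sketch. It does not use penalizedSVM or any R code; the function names, the coefficient values and the choice of taking each term of the sum to be the j-th input value are all assumptions made for illustration.

```python
def decision_value(w, b, x):
    """Compute f(x) = sum_j w_j * x_j + b.

    Assumption: the j-th term of the sum uses the j-th input value directly.
    """
    if len(w) != len(x):
        raise ValueError("w and x must have the same dimension d")
    return sum(wj * xj for wj, xj in zip(w, x)) + b


def predict(w, b, x):
    """Return +1 if f(x) > 0 (example belongs to the dichotomy), else -1."""
    return 1 if decision_value(w, b, x) > 0 else -1


# Hypothetical hyperplane in d = 2 dimensions (values chosen for illustration).
w = [2.0, -1.0]
b = -0.5

for x_test in ([1.0, 0.5], [0.0, 1.0]):
    print(x_test, decision_value(w, b, x_test), predict(w, b, x_test))
```

In a real workflow the coefficients and intercept would come from fitting the model on the training set; here they are fixed by hand so that only the prediction step is shown.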

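The contrast between the SCAD and L1 penalties described above can be checked numerically. The sketch below assumes the commonly cited piecewise SCAD definition (linear up to λ, quadratic between λ and aλ, constant beyond aλ); the text itself only states the knots and the qualitative behavior, so treat the exact formula and the function names as assumptions rather than the package's code.

```python
def l1_penalty(w, lam):
    """L1 penalty: grows linearly with |w|."""
    return lam * abs(w)


def scad_penalty(w, lam, a=3.7):
    """SCAD penalty for a single coefficient w.

    Assumed piecewise form with knots at lam and a * lam:
      |w| <= lam          : lam * |w|                       (same as L1)
      lam < |w| <= a*lam  : quadratic piece joining both ends
      |w| > a*lam         : constant (a + 1) * lam**2 / 2
    """
    if lam <= 0 or a <= 2:
        raise ValueError("requires lam > 0 and a > 2")
    t = abs(w)
    if t <= lam:
        return lam * t
    if t <= a * lam:
        return -(t * t - 2 * a * lam * t + lam * lam) / (2 * (a - 1))
    return (a + 1) * lam * lam / 2


lam = 1.0
for w in (0.5, 2.0, 10.0, 100.0):
    print(w, l1_penalty(w, lam), scad_penalty(w, lam))
```

For coefficients below λ the two penalties coincide, while for coefficients beyond aλ the SCAD value stops growing, which is the property the text credits with reducing bias for large coefficients.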